Methodology: performance tests

At the eleventh hour, but still. The long-awaited Ryzen 7 5700X is here. However, we won’t be writing about the successor to the Ryzen 7 3700X as a significantly cheaper alternative to the Ryzen 7 5800X. The new octa-core Ryzen 7 5700X is primarily more economical compared to the higher-end model. Its power draw is just half in some tasks, which means that temperatures are also significantly lower.

Gaming tests

We test gaming performance at four resolutions with different graphics settings. As a warm-up, there is the more or less theoretical resolution of 1280 × 720 px. We spent a long time tweaking the graphics settings for this resolution and finally decided on the lowest settings (Low, Lowest, Ultra Low, …) that a game allows.

One could object that at such settings the processor does not have to handle as many drawn objects (so-called draw calls). However, with high detail at this very low resolution, performance differed little from FHD (which we also test). Instead, the GPU load was clearly higher, whereas this impractical setting is meant to demonstrate processor performance with the least possible involvement of the graphics card.

At higher resolutions we use high (for FHD and QHD) and highest (for UHD) settings. In Full HD, anti-aliasing is usually turned off, but overall these are relatively practical, commonly used settings.

The games were selected for diversity of genres, popularity among players and processor performance requirements. For a complete list, see chapters 7–16. We use a built-in benchmark when a game has one; otherwise we have created our own scenes, which we repeat in the same way with each processor. We use OCAT to record fps, or rather the times of individual frames, from which fps are then calculated, and FLAT to analyze the CSV output. Both were developed by the author of articles (and videos) from GPUreport.cz. For the highest possible accuracy, every run is repeated three times, and the graphs show the averages of the average and minimum fps. This repetition also applies to non-gaming tests.
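The path from recorded frame times to the plotted values can be illustrated with a minimal Python sketch. It is not the OCAT or FLAT implementation: the function names and the sample frame times are hypothetical, and "minimum fps" is assumed here to mean the fps of the slowest single frame in a run, which may differ from the definition FLAT uses.

```python
from statistics import mean


def run_fps(frame_times_ms):
    """Return (average fps, minimum fps) for one run of frame times in ms."""
    total_seconds = sum(frame_times_ms) / 1000.0
    # Average fps: number of frames divided by the total time they took.
    avg_fps = len(frame_times_ms) / total_seconds
    # Assumption: minimum fps is derived from the slowest single frame.
    min_fps = 1000.0 / max(frame_times_ms)
    return avg_fps, min_fps


def summarize(runs):
    """Average the per-run average and minimum fps across repeated runs."""
    per_run = [run_fps(run) for run in runs]
    return mean(a for a, _ in per_run), mean(m for _, m in per_run)


# Three repetitions of the same (made-up) scene, frame times in milliseconds.
runs = [
    [10.0, 10.0, 20.0, 10.0],
    [10.0, 10.0, 10.0, 10.0],
    [12.5, 12.5, 12.5, 12.5],
]
avg_fps, min_fps = summarize(runs)
print(f"average fps: {avg_fps:.1f}, minimum fps: {min_fps:.1f}")
```

Averaging per-run results, rather than pooling all frames into one long run, keeps a single unusually long or short repetition from dominating the plotted value.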

Computing tests

Let’s start lightly with PCMark 10, which tests more than sixty sub-tasks in various applications as part of a complete set of “benchmarks for a modern office”. It then sorts them into a smaller number of thematic categories, and for the best possible overview we include the scores from these categories in the graphs. Lighter workloads are also represented by web browser tests – Speedometer and Octane. Other tests usually represent a higher load or are aimed at advanced users.

We test 3D rendering performance in Cinebench: in R20, for which results are more widely available, but mainly in R23. Rendering in this version takes longer on every processor, as it runs in cycles of at least ten minutes. We also test 3D rendering in Blender, with the Cycles renderer in the BMW and Classroom projects. You can also compare the latter with the test results of graphics cards (it contains the same number of tiles).

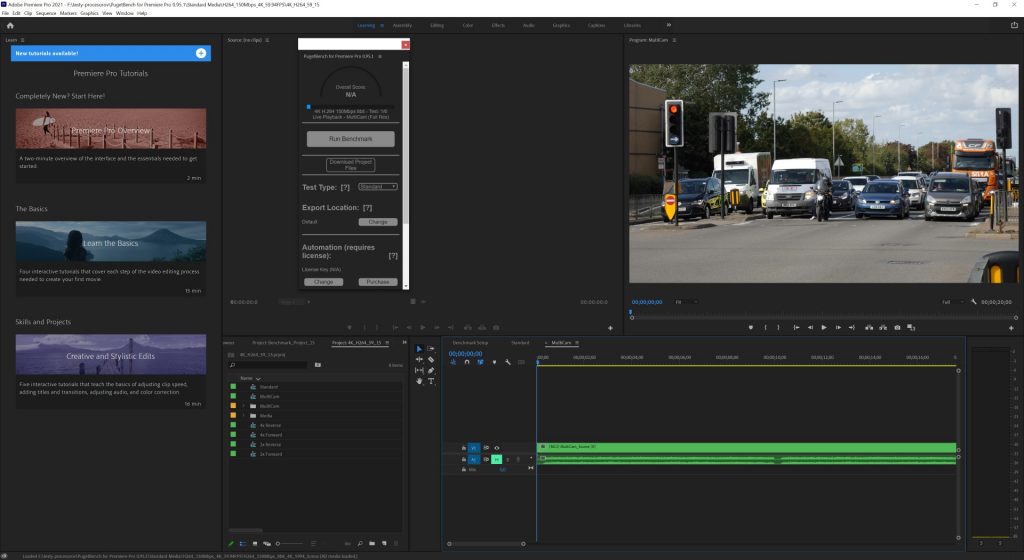

We test how processors perform in video editing in Adobe Premiere Pro and DaVinci Resolve Studio 17. We use the PugetBench plugin, which covers the tasks you may encounter when editing video. We also use PugetBench in Adobe After Effects, where it tests performance in creating graphic effects. Some subtasks use GPU acceleration, but we never turn it off, as no one would do so in practice. Some things don’t even work without GPU acceleration, yet it is interesting to see that performance in GPU-accelerated tasks also varies, as some operations are still handled by the CPU.

We test video encoding in SVT-AV1, in HandBrake and in benchmarks (x264 HD and HWBot x265). The x264 HD benchmark runs in 32-bit mode (we did not manage to run the 64-bit version consistently on W10, and on newer OSes in general it may be unstable and show errors in the video). In HandBrake we use the x264 CPU encoder for AVC and x265 for HEVC. Detailed settings of the individual profiles can be found in the corresponding chapter, 25. In addition to video, we also encode audio; all the details are given in the chapter with those tests. The performance of CPU encoders also matters to gamers who record their gameplay. Therefore, we also test “processor broadcasting” performance in two popular applications, OBS Studio and Xsplit.

We also have two chapters dedicated to photo editing performance. Adobe has a separate one, where we test Photoshop via PugetBench. However, we do not use PugetBench in Lightroom, because it requires various OS modifications for stable operation. Given the higher risk of complications, we avoided it and created our own test scenes. Both are CPU intensive: exporting RAW files to 16-bit TIFF with the ProPhoto RGB color space, and generating 1:1 thumbnails of 42 lossless CR2 photos.

However, we also test CPU performance in several alternative photo editing applications. These include Affinity Photo, in which we use a built-in benchmark, XnViewMP for batch photo editing, and ZPS X. Of the truly modern ones, there are three Topaz Labs applications that use AI algorithms: DeNoise AI, Gigapixel AI and Sharpen AI. Topaz Labs often and happily compares its results with Adobe applications (Photoshop and Lightroom) and boasts of better results. So we’ll see, maybe we’ll look into it from the image quality point of view sometime. In processor tests, however, we are primarily focused on performance.

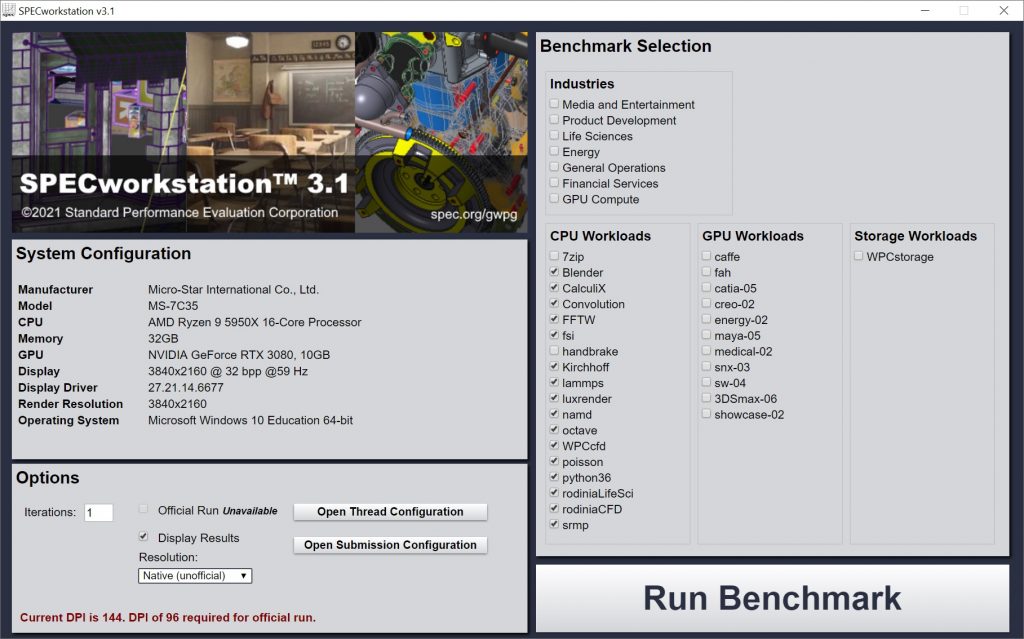

We test compression and decompression performance in the WinRAR, 7-Zip and Aida64 (Zlib) benchmarks, and decryption in TrueCrypt and Aida64, where in addition to AES there are also SHA3 tests. In Aida64, we also test the FPU in the chapter on mathematical calculations. From this category you may also be interested in the results of Stockfish 13 and the number of chess combinations computed per unit of time. We run many tests that fall into the mathematics category in SPECworkstation 3.1. It is a suite of professional applications that extends to various simulations, such as the molecular simulators LAMMPS and NAMD. A detailed description of the SPECworkstation 3.1 tests can be found at spec.org. From its list we skip 7-Zip, Blender and HandBrake to avoid redundancy, because we test them separately in the applications themselves. A detailed listing of SPECWS results usually gives times or fps, but we graph the “SPEC ratio”, which represents the score gained; higher is better.
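To illustrate how a time or an fps value can be turned into a “higher is better” ratio, here is a minimal Python sketch. It assumes the general SPEC convention of normalizing a result against a reference machine and combining ratios with a geometric mean; the reference values, function names and numbers below are hypothetical, and the exact weighting used by SPECworkstation 3.1 is documented at spec.org rather than here.

```python
from math import prod


def ratio_from_time(reference_seconds, measured_seconds):
    """Shorter time than the reference gives a ratio above 1.0."""
    return reference_seconds / measured_seconds


def ratio_from_rate(reference_fps, measured_fps):
    """Higher fps than the reference gives a ratio above 1.0."""
    return measured_fps / reference_fps


def geometric_mean(ratios):
    """Combine several ratios so that no single workload dominates."""
    return prod(ratios) ** (1.0 / len(ratios))


# Hypothetical workloads: one reported as a time, one as fps.
time_ratio = ratio_from_time(reference_seconds=100.0, measured_seconds=50.0)
rate_ratio = ratio_from_rate(reference_fps=30.0, measured_fps=60.0)
composite = geometric_mean([2.0, 8.0])
print(time_ratio, rate_ratio, composite)
```

Expressing both kinds of results as ratios is what allows workloads measured in seconds and workloads measured in fps to share one graph axis.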

Processor settings…

We test processors in the default settings, without active PBO2 (AMD) or ABT (Intel) technologies, but naturally with active XMP 2.0.

… and app updates

Keep in mind that, over time, individual updates may affect performance comparisons. Some applications are used in portable versions, which are not updated or can be kept on a stable version, but this is not the case for some others. Games, typically, are updated over time. On the other hand, deliberately staying on outdated versions (and testing something that already behaves differently in practice) would not be the right approach either.

In short, just take into account that the accuracy of the results you are comparing decreases a bit over time. To make this easier for you to judge, we indicate when each processor was tested. You can find the test date of each processor in the dialog box that appears in the interactive graphs when you hover the mouse cursor over any bar.

- Contents

- AMD Ryzen 7 5700X in detail

- Methodology: performance tests

- Methodology: how we measure power draw

- Methodology: temperature and clock speed tests

- Test setup

- 3DMark

- Assassin’s Creed: Valhalla

- Borderlands 3

- Counter-Strike: GO

- Cyberpunk 2077

- DOOM Eternal

- F1 2020

- Metro Exodus

- Microsoft Flight Simulator

- Shadow of the Tomb Raider

- Total War Saga: Troy

- Overall gaming performance

- Gaming performance per euro

- PCMark and Geekbench

- Web performance

- 3D rendering: Cinebench, Blender, ...

- Video 1/2: Adobe Premiere Pro

- Video 2/2: DaVinci Resolve Studio

- Graphics effects: Adobe After Effects

- Video encoding

- Audio encoding

- Broadcasting (OBS and Xsplit)

- Photos 1/2: Adobe Photoshop and Lightroom

- Photos 2/2: Affinity Photo, Topaz Labs AI Apps, ZPS X, ...

- (De)compression

- (De)cryption

- Numerical computing

- Simulations

- Memory and cache tests

- Processor power draw curve

- Average processor power draw

- Performance per watt

- Achieved CPU clock speed

- CPU temperature

- Conclusion

Nice review, especially the details in the Premiere Pro part.

However, there are some questionable results, namely the “4K H.264, 2× Forward Live Playback [avg. fps] – higher is better” and others, where the 5700X is last by a wide margin. Have you redone the tests (or run similar tests) to see if this was just an outlier? It would have been nice to at least have a comment on such a weird result…

Thanks for your comment. The explanation is in the text of the final chapter, and I also explain this behavior in the Ryzen 5 5600 test, which performs better, but is still the second processor from the bottom in the charts. The point is that 4K H.264 live playback is a single-threaded task in Premiere Pro, but because the other cores are utilized by other application processes, the frequencies are only at the all-core boost level. These are pretty conservative for the Ryzen 7 5700X from today’s perspective (the 5600 is 300 MHz faster here, hence the higher fps), plus Zen 3 doesn’t handle 4K H.264 well overall, and better results are achieved with both 4K ProRes 422 and 4K RED.

Thanks for replying. I read the explanation at the end of the review, but “the 500 MHz difference” still didn’t seem to be enough of a reason for such a big difference. Something else must be at play here, though I am also not sure of what it may be.

Why does the 5600X show more fps than the 5700X in Total War Troy? After all, this strategy game loads the processor heavily and, logically, the 16-thread chip should have beaten the 12-thread one by a large margin!! PS: I myself am a fan of Total War Warhammer 1/2/3 and have a 5600X processor, but yesterday I ordered a new 5700X processor.

The reason for this is apparently very simple. The 6 cores/12 threads of the R5 5600X processor are not a bottleneck for TWST, so higher clock speeds are decisive for higher performance. And the clock speeds the R7 5700X achieves are lower.

Great review, really liking the detailed analysis. I saw a comment on reddit that linked to this review stating that if the 5800x was limited to 65w TDP, the performance efficiency might be similar to the 5700x. Have you thought about testing this out?

Thanks for your comment. Due to a lot of time pressure, we probably won’t be testing the R7 5800X with reduced power draw anymore. But if we see a similar situation with the Ryzen 7000 and something like the R7 6700(X) and R7 6800, we’ll definitely take a look at it. However, it’s good to note that such analyses require multiple CPU samples operating outside of their specs. If a single 5800X sample limited to 65 W performed better than the R7 5700X@TDP/65W, that alone wouldn’t mean anything relevant yet.

It’s similar to overclocking. Based on one sample, it is not possible to generalize that this or that frequency will be achieved at xyz W of power draw. You always have to evaluate it based on multiple processor samples. Sooner or later we will get to that, and once we have more test samples we will for sure look at the variance of the measured values across them as well.