Last year, Nvidia introduced a feature called RTX Video Super Resolution, which uses the GPU to upscale and enhance web video with a DLSS 1.0-like AI filter (the upscaler can also be used in VLC Media Player). The technology has now been extended with RTX Video HDR, another AI filter, which simulates an HDR component for ordinary video to give it high dynamic range visuals. Read more “RTX Video HDR: Nvidia’s AI gives ordinary web videos HDR look”

Tag: artificial intelligence

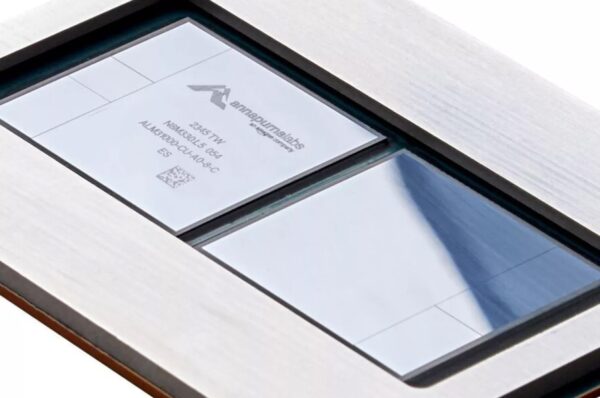

Amazon unveils 96-core ARM Graviton4 CPU and Trainium2 AI chip

Last month, Microsoft unveiled its first custom processors, developed for datacenters and Azure services. Amazon, which was the first of these US hyperscalers to go the custom hardware route, is now also launching new CPUs for its servers. Alongside them comes Trainium2, already the second generation of its in-house AI accelerator. Amazon also revealed that it has already produced over two million of its CPUs. Read more “Amazon unveils 96-core ARM Graviton4 CPU and Trainium2 AI chip”

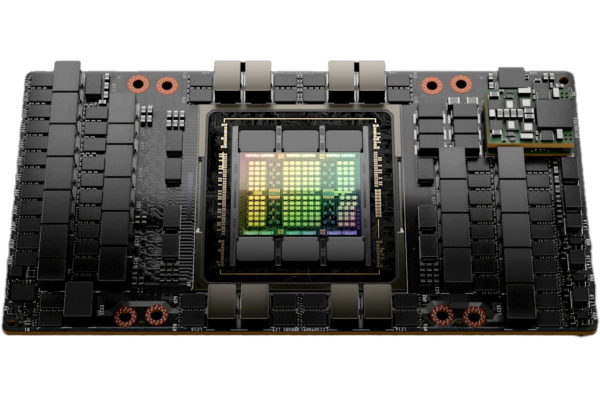

Nvidia’s new fastest AI GPU: H200 with 141GB of HBM3E memory

Last year, Nvidia launched the 4nm H100 accelerator with the Hopper architecture, which has since been the company’s fastest GPU for AI. Now the company is launching its successor, dubbed H200. It isn’t quite a new generation yet, but rather a refresh that will lead Nvidia’s lineup until the next generation with the Blackwell architecture is released. The H200’s main change is faster memory, which should also lift overall performance. Read more “Nvidia’s new fastest AI GPU: H200 with 141GB of HBM3E memory”

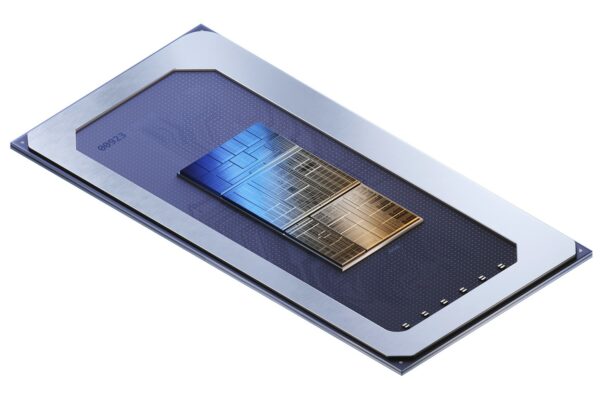

Intel unveils Meteor Lake processors: 4nm, tiles, Xe LPG graphics

Meteor Lake is Intel’s first processor manufactured on its in-house 4nm node, an important milestone. It is also, paradoxically, Intel’s first processor manufactured at TSMC, as many of its parts are outsourced in this way, which is a milestone too. This is the first mainstream Intel processor to use chiplets (or tiles) and advanced 3D packaging. The new CPU cores, new GPU, and new NPU for AI acceleration are almost a bonus on top of all that. Read more “Intel unveils Meteor Lake processors: 4nm, tiles, Xe LPG graphics”

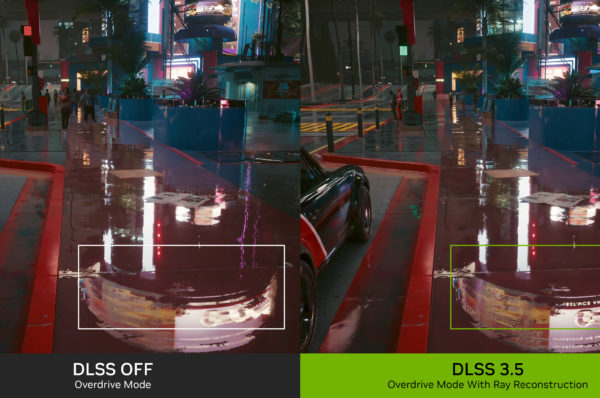

Nvidia unveils DLSS 3.5: Better ray tracing not only for RTX 4000

Nvidia has now announced a new iteration of its DLSS AI upscaling technology, following last year’s third generation, DLSS 3. However, the new DLSS 3.5 is somewhat confusingly named, as it is to some extent more of a continuation of DLSS 2.x: the improvement does not depend on DLSS 3 (also referred to as Frame Generation). That means it also works on older GeForce RTX 2000 and RTX 3000 generation graphics cards. Read more “Nvidia unveils DLSS 3.5: Better ray tracing not only for RTX 4000”

Microsoft preparing its own AI chips to compete with Nvidia’s GPUs

The development of artificial intelligence has gained mainstream awareness in recent months with news about ChatGPT, OpenAI, and similar projects. These advanced neural networks and AI models have large hardware requirements, which benefits Nvidia, whose GPUs are used to train and run them. But this interest may also bring new competitors. Among them is reportedly Microsoft, which is preparing its own chips for AI. Read more “Microsoft preparing its own AI chips to compete with Nvidia’s GPUs”
