SSHDs are dead, long live hard drives using NAND for smarter purposes

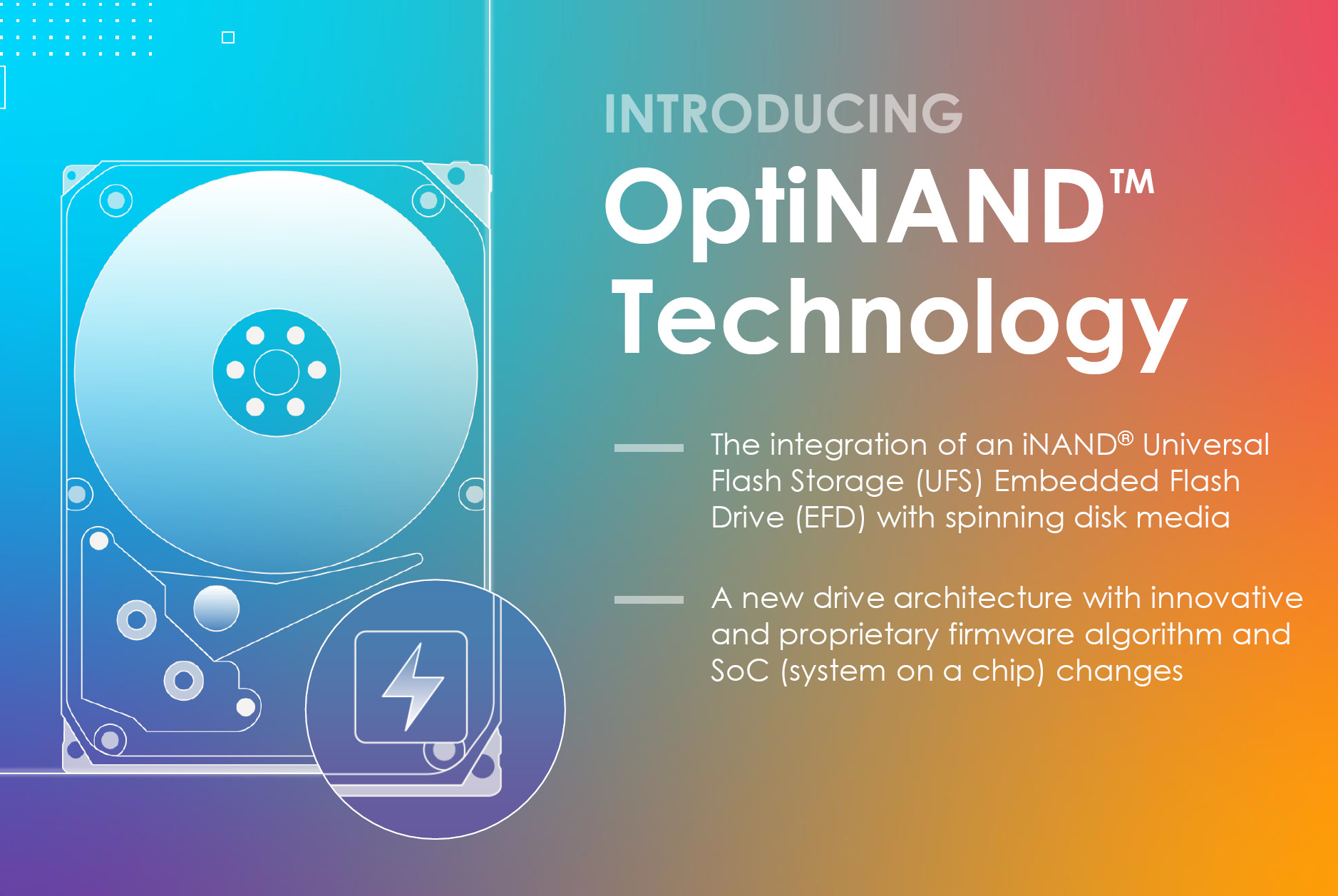

Lately (actually for several years now) we’ve seldom had news about hard disks. An opportunity to write about a new innovation in this area has come now though. Western Digital has introduced OptiNAND technology, which improves the performance of mechanical drives by integrating NAND Flash memory – but not in the way of SSHDs, which only used it for caching. OptiNAND serves other purposes, and it could be much more useful.

OptiNAND involves adding flash storage to the PCB carrying the drive's electronics, using a standard UFS device of the kind otherwise used as storage in mobile devices (WD brands it iNAND). Unlike in earlier SSHDs, however, it is not user data that is stored in this memory, but rather metadata. This allows WD to fit more usable capacity on the platters themselves, and also to improve performance and, supposedly, reliability. While SSHDs only tried (not very successfully, to be sure) to replace system SSDs in a computer, OptiNAND uses NAND to improve the function HDDs are primarily designed for, which is storing large amounts of data.

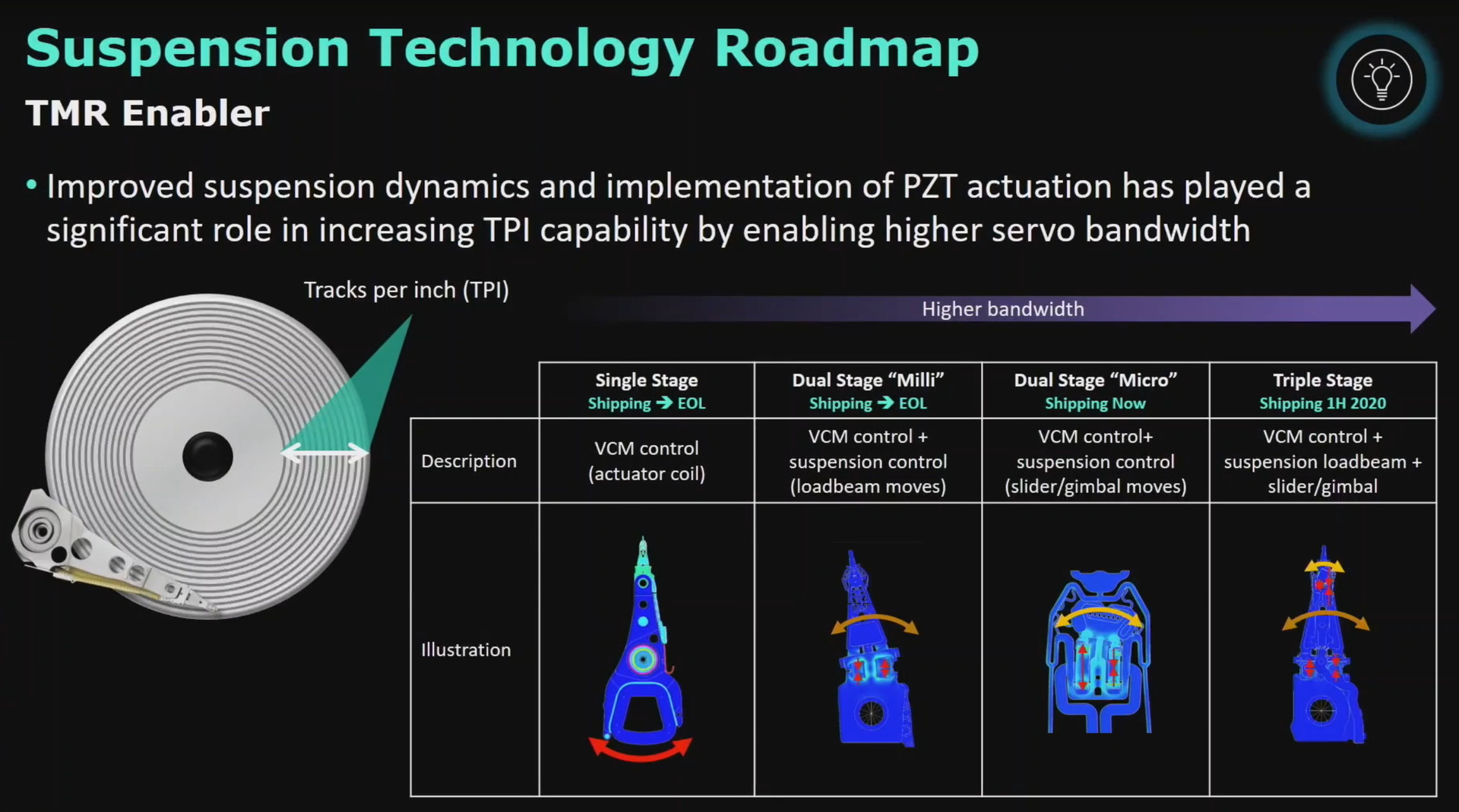

In the next generation of drives using OptiNAND technology, Western Digital will increase the density of tracks on the platter, which, incidentally, will also require three-stage control of the position of the read/write heads (the head actuator itself, an electronic middle stage that twists the beam to adjust the required angle more precisely, and a miniaturized version of the same mechanism at the tip, which further corrects the position of the head itself).

However, a large part of this increase in capacity will be achieved by eliminating the metadata that today must be stored in the tracks themselves. You may remember from earlier times that reducing the amount of extra metadata was already the reason for introducing 4KB sectors, which saved on the overhead of sector start signalling and ECC. However, there is still a lot of metadata stored on the platters, including various calibration information needed for the operation of the HDD, which is a technologically very complex and delicate device. One example is the so-called Repeatable Run Out (RRO) information, which is written to the drive during factory calibration and reaches gigabytes in size.

Moving this and other metadata to NAND saves space in the tracks themselves, so the platter can hold more user data even with the recording technology unchanged and data density maintained. In addition, the work of the mechanical part of the drive should be simplified: it will no longer be necessary to read the stored metadata from the platters first during the actual read and write operations, since the drive firmware/electronics will fetch it independently from the built-in UFS flash storage.

Track refresh bottleneck

Moving the metadata to the NAND storage space itself is intended to enable one more improvement. HDD technology must ensure that the magnetic recording to one track does not cause interference to data already written to neighbouring tracks. To be more precise, this inevitably happens, so the drive’s electronics must occasionally read the already written data and write it again if the adjacent tracks have been changed multiple times and their magnetization repeatedly weakens the signal level of the track in question (a similar mechanism to the RowHammer attack in DRAM – incidentally, NAND suffers from this as well, so there’s no escaping this through switching to an SSD).

As mentioned, HDDs can handle this phenomenon and periodically read and rewrite tracks to “recondition” them. The problem is that the denser the tracks are on the disk, the worse this interference is, and therefore the more often a refresh is needed. Now it’s supposedly needed after as few as a single-digit number of writes to the surrounding tracks (according to WD, it’s sometimes only six writes nowadays). This is starting to be a bottleneck, though, because forcing refreshes this often already carries a significant performance hit. Drives today do these refreshes at the track level, so the entire track has to be overwritten even for very small writes going to just a few sectors.

The metadata recording where and when a refresh is needed also has to be stored somewhere, and up until now the disks kept it in their relatively small DRAM during operation. The small available capacity severely limited the granularity with which this information could be stored. In the OptiNAND architecture, this too will be moved to the added flash storage, which will allow the refresh bookkeeping to be held with much greater granularity.

Thus, the disk will no longer have to refresh (read and rewrite) the entire affected adjacent track because of a single 4KB write, but will only need to refresh the part of it that is actually affected by being located close to the interference-causing write, because the drive will be able to remember the exact addresses of the affected locations. The performance impact of refreshes will be significantly reduced, and this will allow a further reduction in track spacing to store more data, removing one of the barriers that prevented further track density increases and thus also raising the capacity per platter.
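To make the difference in granularity concrete, here is a minimal toy model in Python. It is only a sketch under stated assumptions, not WD's firmware logic: the track size, the size of the fine-grained bookkeeping region, and the function name `sectors_refreshed` are all hypothetical, and only the threshold of six writes comes from the figure WD quotes. The model counts how many sectors get rewritten when the drive tracks disturbances per whole track versus per small region.

```python
from collections import defaultdict

SECTORS_PER_TRACK = 512   # hypothetical track size, in 4 KB sectors
REGION_SECTORS = 8        # hypothetical fine-grained bookkeeping unit
REFRESH_THRESHOLD = 6     # WD's quoted worst case: refresh after six nearby writes


def sectors_refreshed(writes, region_sectors):
    """Count sectors rewritten by refreshes for a given bookkeeping granularity.

    `writes` is a sequence of (track, sector) single-sector writes. Each write
    disturbs the matching region on both neighbouring tracks. Once a region has
    absorbed REFRESH_THRESHOLD disturbances, the whole region is rewritten and
    its counter is reset.
    """
    counters = defaultdict(int)
    refreshed = 0
    for track, sector in writes:
        region = sector // region_sectors
        for neighbour in (track - 1, track + 1):
            key = (neighbour, region)
            counters[key] += 1
            if counters[key] >= REFRESH_THRESHOLD:
                refreshed += region_sectors  # rewrite the whole tracked unit
                counters[key] = 0
    return refreshed


# Twelve small writes landing on the same sector of track 100.
writes = [(100, 40)] * 12
coarse = sectors_refreshed(writes, SECTORS_PER_TRACK)  # one counter per track
fine = sectors_refreshed(writes, REGION_SECTORS)       # one counter per region
print(coarse, fine)  # 2048 32
```

In this toy workload the per-track bookkeeping rewrites 2048 sectors while the per-region bookkeeping rewrites 32. The ratio depends entirely on the assumed sizes, but it illustrates why finer counters need far more memory and yet trigger far less rewriting.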

Better performance and reliability

The presence of NAND flash memory in a relatively large capacity also enables a better combination of performance and reliability in the OptiNAND hard drives. The fact that reading and writing metadata, as well as the eliminated part of the track refreshes, will no longer occupy the mechanical/magnetic part of the disk means, of course, that the I/O performance available to the actual user workloads will increase. But what should also help is that the UFS storage will allow more data from the buffers to be saved in the event of a power failure. When a disk suddenly enters an emergency shutdown due to a power failure, it is especially important to save critical metadata. But alongside this, there is also user data waiting in the (volatile) DRAM cache to be written to the disk’s permanent storage.

Because the drive has to constantly take into account that the power can fail at any time, it can never leave very much data in its DRAM. This severely limits its write performance. But OptiNAND drives will be able to save more data in an emergency caused by a power failure, giving them more room to optimize write operations more aggressively. The biggest difference in performance should occur if the HDD is operated in a mode where the operating system does not use the write cache and all writes are immediately “flushed” to the drive. But even with the write cache active, performance should still improve, albeit less (WD states that with OptiNAND, the performance difference between the state with and without the write cache should be greatly reduced).

According to WD, during a sudden power failure the drive can save up to 50x as much of the data held in volatile memory (DRAM) at that moment as today’s conventional HDDs can.
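The link between the emergency save budget and write performance can be illustrated with a very rough Python sketch. Everything here is an assumption made for illustration: the 1 MiB baseline budget, the workload, and the function name `forced_flushes` are hypothetical, and real drive caching is far more elaborate. Only the 50x factor is taken from WD's claim. The model simply counts how often a cache has to be drained to the platters when it may never hold more unwritten data than could be rescued on power loss.

```python
def forced_flushes(write_sizes_kib, dirty_limit_kib):
    """Count how often the cache must be drained to the platters.

    Writes accumulate in the cache until the next one would push the amount of
    unwritten data above `dirty_limit_kib`, the volume the drive could still
    save if power failed at that instant.
    """
    dirty = 0
    flushes = 0
    for size in write_sizes_kib:
        if dirty + size > dirty_limit_kib:
            flushes += 1  # drain everything before accepting the write
            dirty = 0
        dirty += size
    return flushes


BASE_LIMIT_KIB = 1024                      # hypothetical budget of a conventional HDD
OPTINAND_LIMIT_KIB = 50 * BASE_LIMIT_KIB   # applying the 50x factor quoted by WD

workload = [64] * 1600                     # 1600 writes of 64 KiB (100 MiB in total)
print(forced_flushes(workload, BASE_LIMIT_KIB))      # 99
print(forced_flushes(workload, OPTINAND_LIMIT_KIB))  # 1
```

Fewer forced drains mean the firmware has more freedom to reorder and merge writes before the heads have to move, which is the kind of headroom the paragraph above describes.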

Now in the sample phase, deployment to come with 20TB and larger HDDs

The first drives with OptiNAND technology already exist, but are not yet publicly offered on the market. WD is, however, already sampling them to large customers for testing, probably server manufacturers and hyperscalers. These first samples have a capacity of 20 TB and use Energy Assisted Magnetic Recording technology (EAMR or ePMR). They are also explicitly stated not to use SMR. The capacity of a single platter must be somewhere above 2.2 TB, as these are nine-platter helium-filled disks. Whether these drive models will eventually be sold freely is uncertain.
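The per-platter figure follows from simple division, shown here as a short Python check. The helper name `per_platter_tb` is just an illustrative choice, and the result is an average that ignores any spare or reserved capacity.

```python
def per_platter_tb(total_tb, platters):
    """Average capacity each platter must hold, in TB."""
    return total_tb / platters


# 20 TB spread over nine platters, as in the sampled drives.
print(round(per_platter_tb(20, 9), 2))  # 2.22
```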

Up to 50TB this decade?

But WD says it will deploy this technology commercially in their successors, with capacities of 20 TB and above. In the second half of this decade, drives of up to 50 TB could reportedly be productised, and although this will probably be achieved mainly through HAMR recording, OptiNAND is also expected to help in reaching the milestone.

However, if you’ve been following the hardware news for any length of time, you probably know that the bombastic HDD capacity outlooks are more likely to fail than to be met, as can be seen by looking at some of the old roadmaps that now look painfully naive in hindsight. So we wouldn’t be surprised if HDDs this big take much more time to arrive (if they ever will, that is).

English translation and edit by Jozef Dudáš, original text by Jan Olšan, editor for Cnews.cz